At GUADEC 2022 in Guadalajara I gave a talk, Paying technical debt in our accessibility infrastructure. This is a transcript for that talk.

The video for the talk starts at 2:25:06 and ends at 3:07:18; you can click on the image above and it will take you to the correct timestamp.

Hi there! I'm Federico Mena Quintero, pronouns he/him. I have been working on GNOME since its beginning. Over the years, our accessibility infrastructure has acquired a lot of technical debt, and I would like to help with that.

For people who come to GUADEC from richer countries, you may have noticed that the sidewalks here are pretty rubbish. This is a photo from one of the sidewalks in my town. The city government decided to install a bit of tactile paving, for use by blind people with canes. But as you can see, some of the tiles are already missing. The whole thing feels lacking in maintenance and unloved. This is a metaphor for the state of accessibility in many places, including GNOME.

This is a diagram of GNOME's accessibility infrastructure, which is also the one used on Linux at large, regardless of desktop. Even KDE and other desktop environments use "atspi", the Assistive Technology Service Provider Interface.

The diagram shows the user-visible stuff at the top, and the infrastructure at the bottom. In subsequent slides I'll explain what each component does. In the diagram I have grouped things in vertical bands like this:

-

gnome-shell, GTK3, Firefox, and LibreOffice ("old toolkits") all use atk and atk-adaptor, to talk via DBus, to at-spi-registryd and assistive technologies like screen readers.

-

More modern toolkits like GTK4, Qt5, and WebKit talk DBus directly instead of going through atk's intermediary layer.

-

Orca and Accerciser (and Dogtail, which is not in the diagram) are the counterpart to the applications; they are the assistive tech that is used to perceive applications. They use libatspi and pyatspi2 to talk DBus, and to keep a representation of the accessible objects in apps.

-

Odilia is a newcomer; it is a screen reader written in Rust, that talks DBus directly.

The diagram has red bands to show where context switches happen when applications and screen readers communicate. For example, whenever something happens in gnome-shell, there is a context switch to dbus-daemon, and another context switch to Orca. The accessibility protocol is very chatty, with a lot of going back and forth, so these context switches probably add up — but we don't have profiling information just yet.

There are many layers of glue in the accessibility stack: atk, atk-adaptor, libatspi, pyatspi2, and dbus-daemon are things that we could probably remove. We'll explore that soon.

Now, let's look at each component separately.

For simplicity, let's look just at the path of communication between gnome-shell and Orca. We'll have these components involved: gnome-shell, atk, atk-adaptor, dbus-daemon, libatspi, pyatspi2, and finally Orca.

Gnome-shell implements its own toolkit, St, which stands for "shell toolkit". It is made accessible by implementing the GObject interfaces in atk. To make a toolkit accessible means adding a way to extract information from it in a standard way; you don't want screen readers to have separate implementations for GTK, Qt, St, Firefox, etc. For every window, regardless of toolkit, you want to have a "list children" method. For every widget you want "get accessible name", so for a button it may tell you "OK button", and for an image it may tell you "thumbnail of file.jpg". For widgets that you can interact with, you want "list actions" and "run action X", so a button may present an "activate" action, and a check button may present a "toggle" action.

However, ATK is just abstract interfaces for the benefit of toolkits. We need a way to ship the information extracted from toolkits to assistive tech like screen readers. The atspi protocol is a set of DBus interfaces that an application must implement; atk-adaptor is an implementation of those DBus interfaces that works by calling atk's slightly different interfaces, which in turn are implemented by toolkits. Atk-adaptor also caches some things that it already asked to the toolkit, so it doesn't have to ask again unless the toolkit notifies about a change.

Does this seem like too much translation going on? It is! We will see the reasons behind that when we talk about how accessibility was implemented many years ago in GNOME.

So, atk-adaptor ships the information via the DBus daemon. What's on the other side? In the case of Orca it is libatspi, a hand-written binding to the DBus interfaces for accessibility. It also keeps an internal representation of the information that it got shipped from the toolkit. When Orca asks, "what's the name of this widget?", libatspi may already have that information cached. Of course, the first time it does that, it actually goes and asks the toolkit via DBus for that information.

But Orca is written in Python, and libatspi is a C library. Pyatspi2 is a Python binding for libatspi. Many years ago we didn't have an automatic way to create language bindings, so there is a hand-writtten "old API" implemented in terms of the "new API" that is auto-generated via GObject Introspection from libatspi.

Pyatspi2 also has a bit of logic which should probably not be there, but rather in Orca itself or in libatspi.

Finally we get to Orca. It is a screen reader written in 120,000 lines of Python; I was surprised to see how big it is! It uses the "old API" in pyatspi2.

Orca uses speech synthesis to read out loud the names of widgets, their available actions, and generally any information that widgets want to present to the user. It also implements hotkeys to navigate between elements in the user interface, or a "where am I" function that tells you where the current focus is in the widget hierarchy.

Sarah Mei tweeted "We think awful code is written by awful devs. But in reality, it's written by reasonable devs in awful circumstances."

What were those awful circumstances?

Here I want to show you some important events surrounding the infrastructure for development of GNOME.

We got a CVS server for revision control in 1997, and a Bugzilla bug tracker in 1998 when Netscape freed its source code.

Also around 1998, Tara Hernandez basically invented Continuous Integration while at Mozilla/Netscape, in the form of Tinderbox. It was a build server for Netscape Navigator in all its variations and platforms; they needed a way to ensure that the build was working on Windows, Mac, and about 7 flavors of Unix that still existed back then.

In 2001-2002, Sun Microsystems contributed the accessibility code for GNOME 2.0. See Emmanuele Bassi's talk from GUADEC 2020, "Archaeology of Accessibility" for a much more detailed description of that history (LWN article, talk video).

Sun Microsystems sold their products to big government customers, who often have requirements about accessibility in software. Sun's operating system for workstations used GNOME, so it needed to be accessible. They modeled the architecture of GNOME's accessibility code on what they already had working for Java's Swing toolkit. This is why GNOME's accessibility code is full of acronyms like atspi and atk, and vocabulary like adapters, interfaces, and factories.

Then in 2006, we moved from CVS to Subversion (svn).

Then in 2007, we get gtestutils, the unit testing framework in Glib. GNOME started in 1996; this means that for a full 11 years we did not have a standard infrastructure for writing tests!

Also, we did not have continuous integration nor continuous builds, nor reproducible environments in which to run those builds. Every developer was responsible for massaging their favorite distro into having the correct dependencies for compiling their programs, and running whatever manual tests they could on their code.

2008 comes and GNOME switches from svn to git.

In 2010-2011, Oracle acquires Sun Microsystems and fires all the people who were working on accessibility. GNOME ends up with approximately no one working on accessibility full-time, when it had about 10 people doing so before.

GNOME 3 happens, and the accessibility code has to be ported in emergency mode from CORBA to DBus.

GitHub appears in 2008, and Travis CI, probably the first generally-available CI infrastructure for free software, appears in 2011. GNOME of course is not developed there, but in its own self-hosted infrastructure (git and cgit back then, with no CI).

Jessie Frazelle invents usable containers 2013-2015 (Docker). Finally there is a non-onerous way of getting a reproducible environment set up. Before that, who had the expertise to use Yocto to set up a chroot? In my mind, that seemed like a thing people used only if they were working on embedded systems.

But it is until 2016 that rootless containers become available.

And it is only until 2018 that we get gitlab.gnome.org - a Git-based forge that makes it easy to contribute and review code, and have a continuous integration infrastructure. That's 21 years after GNOME started, and 16 years after accessibility first got implemented.

Before that, tooling is very primitive.

In 2015 I took over the maintainership of librsvg, and in 2016 I started porting it to Rust. A couple of years later, we got gitlab.gnome.org, and Jordan Petridis and myself added the initial CI. Years later, Dunja Lalic would make it awesome.

When I took over librsvg's maintainership, it had few tests which didn't really work, no CI, and no reproducible environment for compilation. The book by Michael Feathers, "Working effectively with legacy code" describes "legacy code is code without tests".

When I started working on accessibility at the beginning of this year, it had few tests which didn't really work, no CI, and no reproducible environment.

Right now, Yelp, our help system, has few tests which don't really work, no CI, and no reproducible environment.

Gnome-session right now has few tests which don't really work, no CI, and no reproducible environment.

I think you can start to see a pattern here...

This is a chart generated by the git-of-theseus tool. It shows how many lines of code got added each year, and how much of that code remained or got displaced over time.

For a project with constant maintenance, like GTK, you get a pattern like in the chart above: the number of lines of code increases steadily, and older code gradually diminishes as it is replaced by newer code.

For librsvg the picture is different. It was mostly unmaintained for a few years, so the code didn't change very much. But when it got gradually ported to Rust over the course of three or four years, what the chart shows is that all the old code shrinks to zero while new code replaces it completely. That new code has constant maintainenance, and it follows the same pattern as GTK's.

Orca is more or less the same as GTK, although with much slower replacement of old code. More accretion, less replacement. That big jump before 2012 is when it got ported from the old CORBA interfaces for accessibility to the new DBus ones.

This is an interesting chart for at-spi2-core. During the GNOME2 era, when accessibility was under constant maintenance, you can see the same "constant growth" pattern. Then there is a lot of removal and turmoil in 2009-2010 as DBus replaces CORBA, followed by quick growth early in the GNOME 3 era, and then just stagnation as the accessibility team disappeared.

How do we start fixing this?

The first thing is to add continuous integration infrastructure (CI). Basically, tell a robot to compile the code and run the test suite every time you "git push".

I copied the initial CI pipeline from libgweather, because Emmanuele Bassi had recently updated it there, and it was full of good toys for keeping C code under control: static analysis, address sanitizer, code coverage reports, documentation generation. It was also a CI pipeline for a Meson-based project; Emmanuele had also ported most of the accessibility modules to Meson while he was working for the GNOME Foundation. Having libgweather's CI scripts as a reference was really valuable.

Later, I replaced that hand-written setup for a base Fedora container image with Freedesktop CI templates, which are AWESOME. I copied that setup from librsvg, where Jordan Petridis had introduced it.

The CI pipeline for at-spi2core has five stages:

-

Build container images so that we can have a reproducible environment for compiling and running the tests.

-

Build the code and run the test suite.

-

Run static analysis, dynamic analysis, and get a test coverage report.

-

Generate the documentation.

-

Publish the documentation, and publish other things that end up as web pages.

Let's go through each stage in detail.

First we build a reproducible environment in which to compile the code and run the tests.

Using Freedesktop CI templates, we start with two base images for "empty distros", one for openSUSE (because that's what I use), and Fedora (because it provides a different build configuration).

CI templates are nice because they build the container, install the build dependencies, finalize the container image, and upload it to gitlab's container registry all in a single, automated step. The maintainer does not have to generate container images by hand in their own computer, nor upload them. The templates infrastructure is smart enough not to regenerate the images if they haven't changed between runs.

CI templates are very flexible. They can deal with containerized builds, or builds in virtual machines. They were developed by the libinput people, who need to test all sorts of varied configurations. Give them a try!

Basically, "meson setup", "meson compile", "meson install", "meson test", but with extra detail to account for the particular testing setup for the accessibility code.

One interesting thing is that for example, openSUSE uses dbus-daemon for the accessibility bus, which is different from Fedora, which uses dbus-broker instead.

The launcher for the accessibility bus thus has different code paths and configuration options for dbus-daemon vs. dbus-broker. We can test both configurations in the CI pipeline.

HELP WANTED: Unfortunately, the Fedora test job doesn't run the tests yet! This is because I haven't learned how to run that job in a VM instead of a container — dbus-broker for the session really wants to be launched by systemd, and it may just be easier to have a full systemd setup inside a VM rather than trying to run it inside a "normal" containerized job.

If you know how to work with VM jobs in Gitlab CI, we'd love a hand!

The third stage is thanks to the awesomeness of modern compilers. The low-level accessibility infrastructure is written in C, so we need all the help we can get from our tools!

We run static analysis to catch many bugs at compilation time. Uninitialized variables, trivial memory leaks, that sort of thing.

Also, address-sanitizer. C is a memory unsafe language, so catching pointer mishaps early is really important. Address-sanitizer doesn't catch everything, but it is better than nothing.

Finally, a test coverage job, to see which lines of code managed to get executed while running the test suite. We'll talk a lot more about code coverage in the following slides.

At least two jobs generate HTML and have to publish it: the documentation job, and the code coverage reports. So, we do that, and publish the result with Gitlab pages. This "static web hosting inside Gitlab", which makes things very easy.

Adding a CI pipeline is really powerful. You can automate all the things you want in there. This means that your whole arsenal of tools to keep code under control can run all the time, instead of only when you remember to run each tool individually, and without requiring each project member to bother with setting up the tools themselves.

The original accessibility code was written before we had a culture of ubiquitous unit tests. Refactoring the code to make it testable makes it a lot better!

In a way, it is rewarding to become the CI person for a project and learn how to make the robots do the boring stuff. It is very rewarding to see other project members start using the tools that you took care to set up for them, because then they don't have to do the same kind of setup.

It is also kind of a pain in the ass to keep the CI updated. But it's the same as keeping any other basic infrastructure running: you cannot think of going back to live without it.

Now let's talk about code coverage.

A code coverage report tells you which lines of code have been executed, and which ones haven't, after running your code. When you get a code coverage report while running the test suite, you see which code is actually exercised by the tests.

Getting to 100% test coverage is very hard, and that's not a useful goal - full coverage does not indicate the absence of bugs. However, knowing which code is not tested yet is very useful!

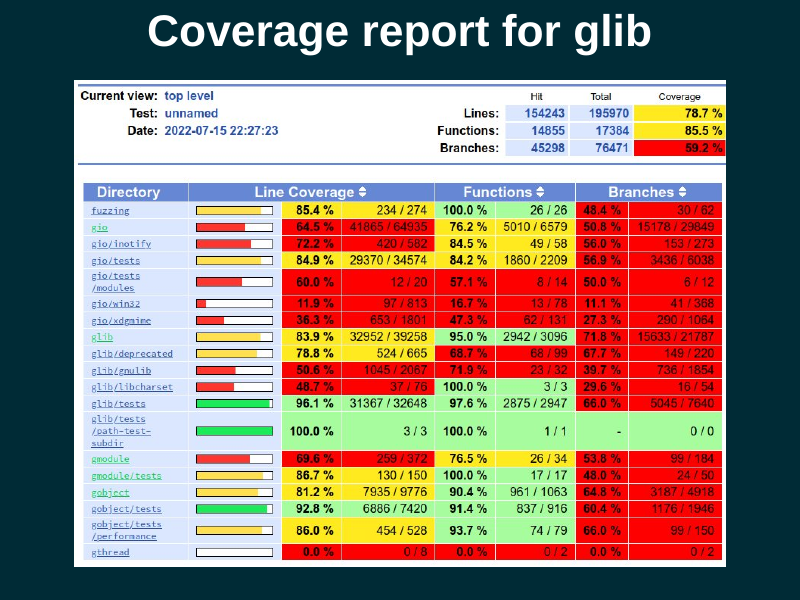

Code that didn't use to have a good test suite often has many code paths that are untested. You can see this in at-spi2-core. Each row in that toplevel report is for a directory in the source tree, and tells you the percentage of lines within the directory that are executed as a result of running the test suite. If you click on one row, you get taken to a list of files, from which you can then select an individual file to examine.

As you can see here, librsvg has more extensive test coverage. This is because over the last years, we have made sure that every code path gets exercised by the test suite. It's not at 100% yet (and there are bugs in the way we obtain coverage for the c_api, for example, which is why it shows up almost uncovered), but it's getting there.

My goal is to make at-spi2-core's tests equally comprehensive.

Both at-spi2-core and librsvg use Mozilla's grcov tool to generate the coverage reports. Grcov can consume coverage data from LLVM, GCC, and others, and combine them into a single report.

Glib is a much more complex library, and it uses lcov instead of grcov. Lcov is an older tool, not as pretty, but still quite functional (in particular, it is very good at displaying branch coverage).

This is what the coverage report looks for a single C file. Lines that were executed are in green; lines that were not executed are in red. Lines in white are not instrumented, because they produce no executable code.

The first column is the line number; the second column is the number of times each line got executed. The third column is of course the code itself.

In this extract, you can see that all the lines in the

impl_GetChildren() function got executed, but none of the lines in

impl_GetIndexInParent() got executed. We may need to write a test that

will cause the second function to get executed.

The accessibility code needs to process a bunch of properties in DBus objects. For example, the Python code at the top of the slide queries a set of properties, and compares them against their expected values.

At the bottom, there is the coverage report. The C code that handles each property is indeed executed, but the code for the error path, that handles an invalid property name, is not covered yet; it is color-coded red. Let's add a test for that!

So, we add another test, this time for the error path in the C code. Ask for the value of an unknown property, and assert that we get the correct DBus exception back.

With that test in place, the C code that handles that error case is covered, and we are all green.

What I am doing here is to characterize the behavior of the DBus API, that is, to mirror its current behavior in the tests because that is the "known good" behavior. Then I can start refactoring the code with confidence that I won't break it, because the tests will catch changes in behavior.

By now you may be familiar with how Gitlab displays diffs in merge requests.

One somewhat hidden nugget is that you can also ask it to display the code coverage for each line as part of the diff view. Gitlab can display the coverage color-coding as a narrow gutter within the diff view.

This lets you answer the question, "this code changed, does it get executed by a test?". It also lets you catch code that changed but that is not yet exercised by the test suite. Maybe you can ask the submitter to add a test for it, or it can give you a clue on how to improve your testing strategy.

The trick to enable that is to use the

artifacts:reports:coverage_report key in .gitlab-ci.yml. You have

your tools create a coverage report in Cobertura XML format, and you

give it to Gitlab as an artifact.

See the gitlab documentation on coverage reports for test coverage visualization.

When grcov outputs an HTML report, it creates something that looks and

feels like a <table>, but which is not an HTML table. It is just a

bunch of nested <div> elements with styles that make them look like

a table.

I was worried about how to make it possible for people who use screen readers to quickly navigate a coverage report. As a sighted person, I can just look at the color-coding, but a blind person has to navigate each source line until they find one that was executed zero times.

Eitan Isaacson kindly explained the basics of ARIA tags to me, and

suggested how to fix the bunch of <div> elements. First, give them

roles like table, row, cell. This tells the browser that the

elements are to be navigated and presented to accessibility tools as

if they were in fact a <table>.

Then, generate an aria-label for each cell where the report shows

the number of times a line of code was executed. For lines not

executed, sighted people can see that this cell is just blank, but has

color coding; for blind people the aria-label can be "no coverage"

or "zero" instead, so that they can perceive that information.

We need to make our development tools accessible, too!

You can see the pull request to make grcov's HTML more accessible.

Speaking of making development tools accessible, Mike Gorse found a bug in how Gitlab shows its project badges. All of them have an alt text of "Project badge", so for someone who uses a screen reader, it is impossible to tell whether the badge is for a build pipeline, or a coverage report, etc. This is as bad as an unlabelled image.

You can see the bug about this in gitlab.com.

One important detail: if you want code coverage information, your processes must exit cleanly!!!. If they die with a signal (SIGTERM, SIGSEGV, etc.), then no coverage information will be written for them and it will look as if your code got executed zero times.

This is because gcc and clang's runtime library writes out the

coverage info during program termination. If your program dies before

main() exits, the runtime library won't have a chance to write the

coverage report.

During a normal user session, the lifetime of the accessibilty daemons (at-spi-bus-launcher and at-spi-registryd) is controlled by the session manager.

However, while running those daemons inside the test suite, there is no user session! The daemons would get killed when the tests terminate, so they wouldn't write out their coverage information.

I learned to use Martin Pitt's python-dbusmock to write a minimal mock of gnome-session's DBus interfaces. With this, the daemons think that they are in fact connected to the session manager, and can be told by the mock to exit appropriately. Boom, code coverage.

I want to stress how awesome python-dbusmock is. It took me 80 lines of Python to mock the necessary interfaces from gnome-session, which is pretty great, and can be reused by other projects that need to test session-related stuff.

I am using pytest to write tests for the accessibility interfaces via DBus. Using DBus from Python is really pleasant.

For those tests, a test fixture is "an accessibility registry daemon tied to the session manager". This uses a "session manager fixture". I made the session manager fixture tear itself down by informing all session clients of a session Logout. This causes the daemons to exit cleanly, to get coverage information.

The setup for the session manager fixture is very simple; it just

connects to the session bus and acquires the

org.gnome.SessionManager name there.

Then we yield mock_session. This makes the fixture present itself to whatever needs to call it.

When the yield comes back, we do the teardown stage. Here we just

tell all session clients to terminate, by invoking the Logout method

on the org.gnome.SessionManager interface. The mock session manager

sends the appropriate singals to connected clients, and the clients

(the daemons) terminate cleanly.

I'm amazed at how smoothly this works in pytest.

The C code for accessibility was written by hand, before the time when we had code generators to implement DBus interfaces easily. It is extremely verbose and error-prone; it uses the old libdbus directly and has to piece out every argument to a DBus call by hand.

This code is really hard to maintain. How do we fix it?

What I am doing is to split out the DBus implementations:

- First get all the arguments from DBus - marshaling goo.

- Then, the actual logic that uses those arguments' values.

- Last, construct the DBus result - marshaling goo.

If you know refactoring terminology, I "extract a function" with the actual logic and leave the marshalling code in place. The idea is to do that for the whole code, and then replace the DBus gunk with auto-generated code as much a possible.

Along the way, I am writing a test for every DBus method and property that the code handles. This will give me safety when the time comes to replace the marshaling code with auto-generated stuff.

We need reproducible environments to build and test our code. It is not acceptable to say "works on my machine" anymore; you need to be able to reproduce things as much as possible.

Code coverage for tests is really useful! You can do many tricks with it. I am using it to improve the comprehensiveness of the test suite, to learn which code gets executed with various actions on the DBus interfaces, and as an exploratory tool in general while I learn how the accessibility code really works.

Automated builds on every push, with tests, serve us to keep the code from breaking.

Continuous integration is generally available if we choose to use it. Ask for help if your project needs CI! It can be overwhelming to add it the first time.

Let the robots do the boring work. Constructing environments reproducibly, building the code and running the tests, analyzing the code and extracting statistics from it — doing that is grunt work, and a computer should do it, not you.

"The Not Rocket Science Rule of Software Engineering" is to automatically maintain a repository of code that always passes all the tests. That, with monotonically increasing test coverage, lets you change things with confidence. The rule is described eloquently by Graydon Hoare, the original author of Rust and Monotone.

There is tooling to enforce this rule. For GitHub there is Homu; for Gitlab we use Marge-bot. You can ask the GNOME sysadmins if you would like to turn it on for your project. Librsvg and GStreamer use it very productively. I hope we can start using Marge-bot for the accessibility repositories soon.

The moral of the story is that we can make things better. We have much better tooling than we had in the early 2000s or 2010s. We can fix things and improve the basic infrastructure for our personal computing.

You may have noticed that I didn't talk much about accessibility. I talked mostly about preparing things to be able to work productively on learning the accessibility code and then improving it. That's the stage I'm at right now! I learn code by refactoring it, and all the CI stuff is to help me refactor with confidence. I hope you find some of these tools useful, too.

(That is a photo of me and my dog, Mozzarello.)

I want to thank the people that have kept the accessibility code functioning over the years, even after the rest of their team disappeared: Joanmarie Diggs, Mike Gorse, Samuel Thibault, Emmanuele Bassi.